First article in a series covering scraping data from the web into R; Part II (scraping JSON data) is here, Part III (targeting data using CSS selectors) is here, and we give some suggestions on potential projects here.

There is a massive amount of data available on the web. Some of it is in the form of formatted, downloadable data-sets which are easy to access. But the majority of online data exists as web content such as blogs, news stories and cooking recipes. With formatted files, accessing the data is fairly straightforward; just download the file, unzip if necessary, and import into R.

For “wild” data however, getting the data into an analyzable format is more difficult. Accessing online data of this sort is sometimes referred to as “web scraping”. You will need to download the target page from the Internet and extract the information you need. Two R facilities, readLines() from the base package and getURL() from the RCurl package make this task possible.

readLines

For basic web scraping tasks the readLines() function will usually suffice. readLines() allows simple access to webpage source data on non-secure servers. In its simplest form, readLines() takes a single argument – the URL of the web page to be read:

# r read webpage

web_page <- readLines("http://www.interestingwebsite.com")As an example of a (somewhat) practical use of web scraping, imagine a scenario in which we wanted to know the 10 most frequent posters to the R-help listserve for January 2009. Because the listserve is on a secure site (e.g. it has https:// rather than http:// in the URL) we can’t easily access the live version with readLines(). So for this example, I’ve posted a local copy of the list archives on the this site.

# Get the page's source

web_page <- read.csv("https://www.programmingr.com/jan09rlist.html")

# Pull out the appropriate line

author_lines <- web_page[grep("<I<", web_page)]

# Delete unwanted characters in the lines we pulled out

authors <- gsub("<I<", "", author_lines, fixed = TRUE)

# Present only the ten most frequent posters

author_counts <- sort(table(authors), decreasing = TRUE)

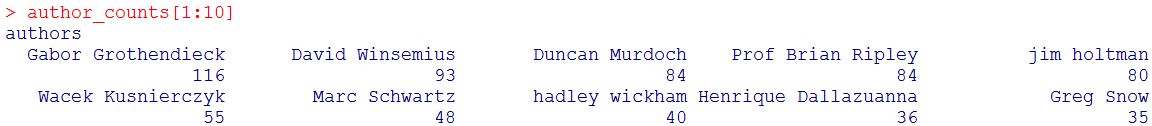

author_counts[1:10]

One note, by itself readLines() can only acquire the data. You’ll need to use grep(), gsub() or equivalents to parse the data and keep what you need. A key challenge in web scraping is finding a way to unpack the data you want from a web page full of other elements.

We can see that Gabor Grothendieck was the most frequent poster to R-help in January 2009.

Looking Under The Hood

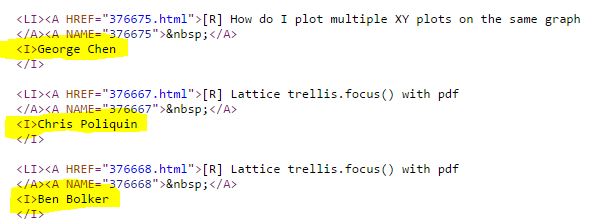

To understand why this example was so straightforward, here is a closer look at the underlying HTML:

Honestly, this is about as user friendly as you can get with HTML data formatted “in the wild”. The data element we are interested in (poster name) is broken out as the main element on its own line. We can quickly and easily grab these lines using grep(). Once we have the lines we’re interested in, we can trim them down by using gsub() to replace the unwanted HTML code.

Incidentally, for those of you who are also web developers, this can be a huge time saver for repetitive tasks. If you’re not working with anything highly sensitive, add a few simple “data dump” pages to your site and use readLines() to pull back the data when you need it. This is great for progress reporting and status updates. Just be sure to keep page design simple – basic, well formatted HTML with minimal fluff.

Looking for A Test Project? Check Out our Big List of Web Scraping Project Ideas!

The RCurl package

To get more advanced http features such as POST capabilities and https access, you’ll need to use the RCurl package. To do web scraping tasks with the RCurl package use the getURL() function. After the data has been acquired via getURL(), it needs to be restructured and parsed. The htmlTreeParse() function from the XML package is tailored for just this task. Using getURL() we can access a secure site so we can use the live site as an example this time.

# rcurl example / rcurl tutorial

# Install the RCurl package if necessary

install.packages("RCurl", dependencies = TRUE)

library("RCurl")

# Install the XML package if necessary

install.packages("XML", dependencies = TRUE)

library("XML")

# geturl function in r - Get first quarter archives

jan09 <- getURL("https://stat.ethz.ch/pipermail/r-help/2009-January/date.html", ssl.verifypeer = FALSE)

# htmltreeparse - get the contents

jan09_parsed <- htmlTreeParse(jan09)

# Continue on similar to above

...For basic web scraping tasks readLines() will be enough and avoids over complicating the task. For more difficult procedures or for tasks requiring other http features getURL() or other functions from the RCurl package may be required.

This was the first in our series on web scraping. Check out one of the later articles to learn more about scraping:

- Using rvest to scrape targeted pieces of HTML (CSS Selectors)

- Using jsonlite to scrap data from AJAX websites

- Scraper Ergo Sum – Suggested projects for going deeper on web scraping

You may also be interested in the following

- Accessing data for R using SPARQL (Semantic Web Queries)

- Using R Animations to spice up your presentations

- Mass producing graphics using scraped data

This “WEBSCRAPING USING READLINES AND RCURL” is really helpful.But,most of the examples are available only for static webpage text extraction.

I am wondering and I cant see any tutorial or blogs for dynamic web page text data extraction because if I want to analyse text mining for 1000 webpages texts its time consuming for collecting the data from multi webpages.

use “rvest” package in R and an extension called”selectorgadget” in Chrome to easily obtain the CSS selector from the website.

Hi all,

when I try to connect on url path,

i see:

Error in file(con, “r”) : cannot open the connection

In addition: Warning message:

In file(con, “r”) :

unable to connect to ‘www.interestingwebsite.com’ on port 80.

Anyone see why?

many thanks

David

Hi i try to do

web_page <- readLines("https://en.wikipedia.org/wiki/Statistics"😉

but error occurred as followed

Error in file(con, "r") : https:// URLs are not supported

however i already install RCurl package

but didnt solve

Hi all, I tried to run the scraping procedure as this article demonstrated. But the result is quite strange:

> web_page author_lines <- web_page[grep("“, web_page)]

> autauthors <- gsub("“, “”, author_lines, fixed = TRUE)

> author_counts author_counts[1:10]

[1] NA NA NA NA NA NA NA NA NA NA

Anyone knows why? Thx

The script went messy after I paste them there. But I found that following exactly the code in that “readLines” section, the whole scraping thing works if you change “read.csv” in the 1st-line into “readLines”.